PrismML Bonsai 1.7B

Official PrismML Bonsai demo, run through the same quick operator pack. Fine for lightweight local use, but it missed the routing task badly and is not an OpenClaw default.

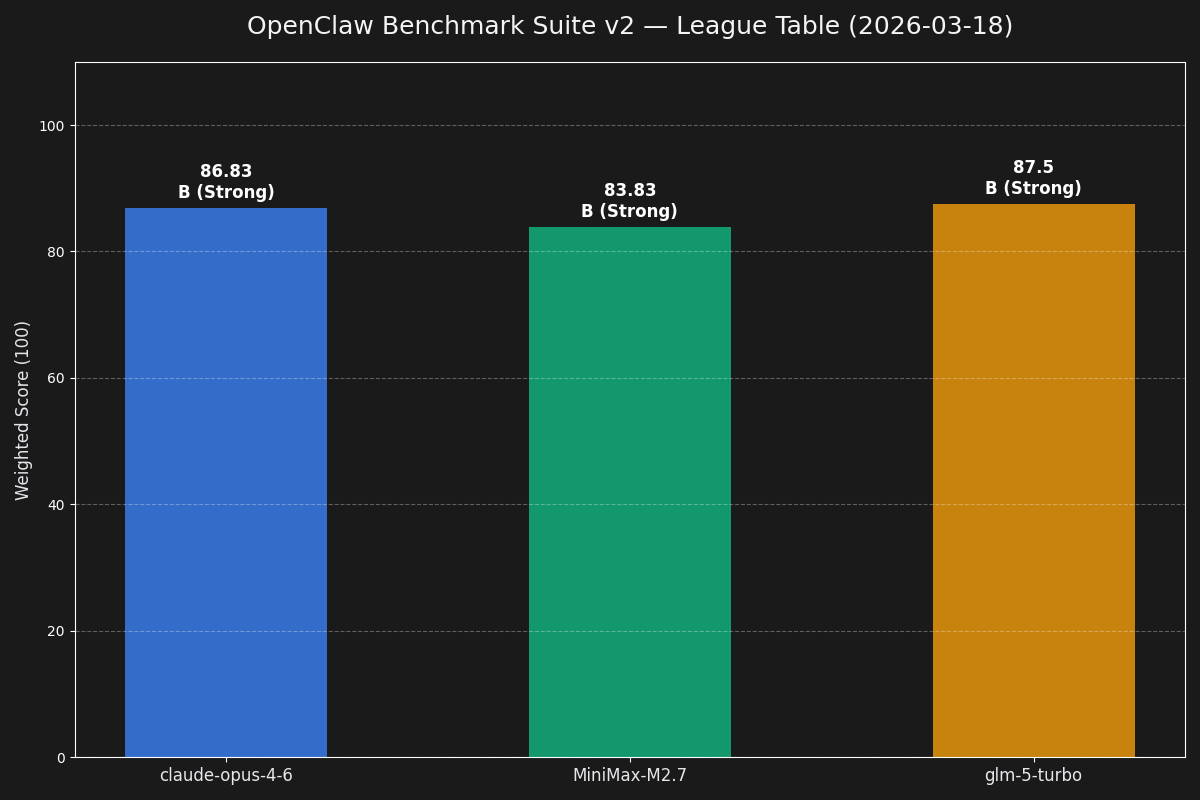

Not synthetic beauty-pageant nonsense. These are the runs, scoreboards, and drill-downs we use to compare models on real operator work.

The benchmark explainer page with the current leaderboard, benchmark canon, and graphics.


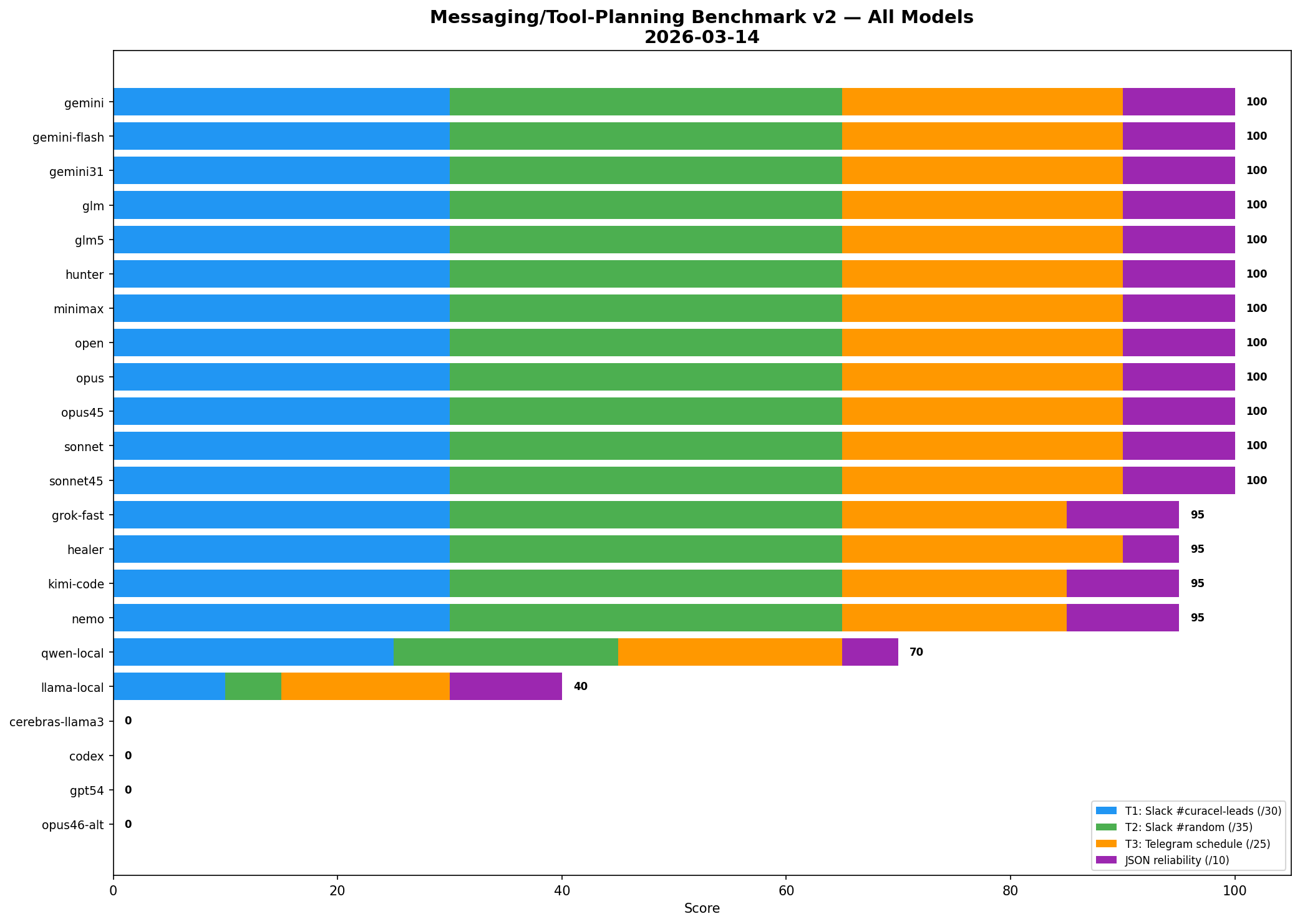

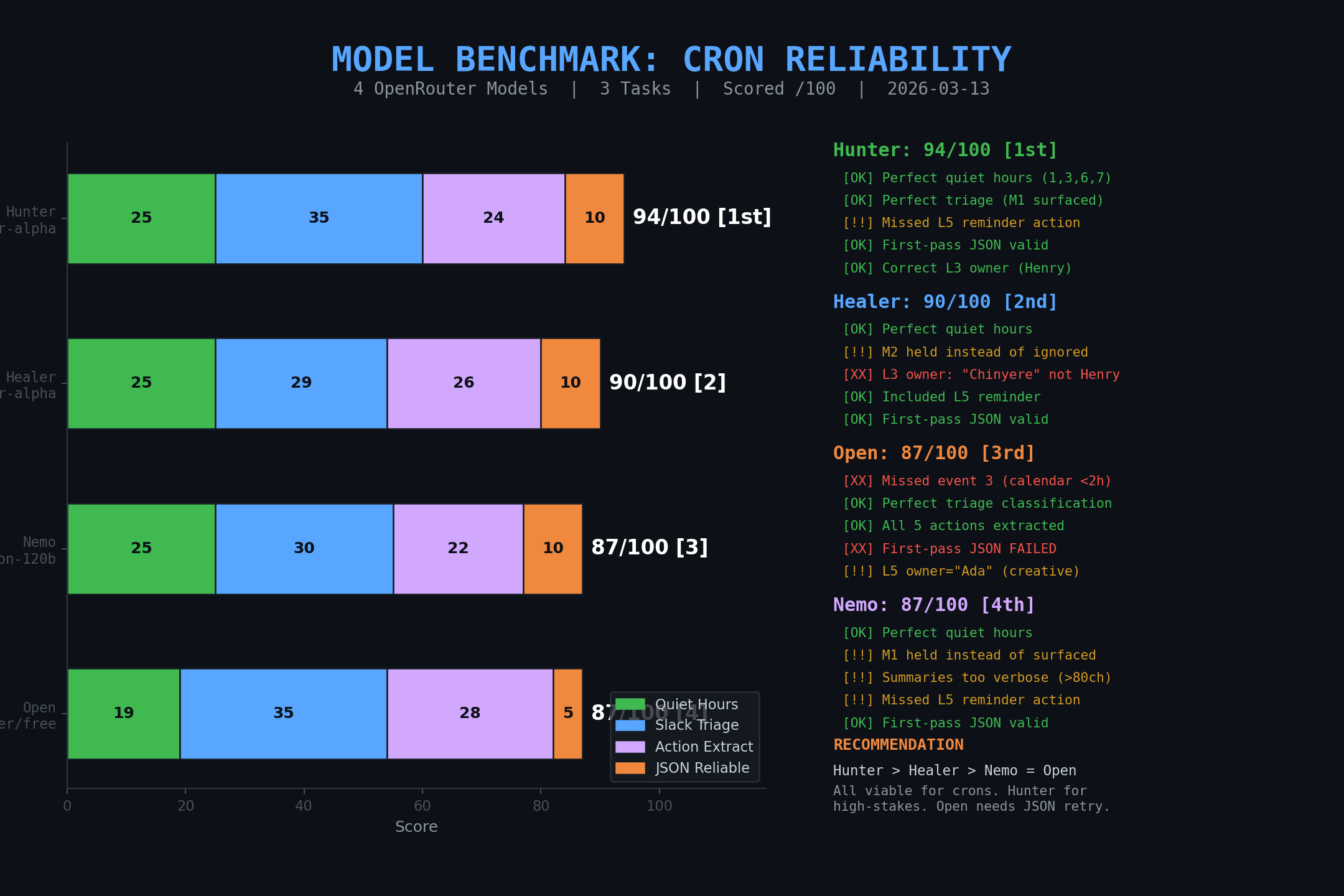

The serious one. Routing, recovery, config safety, delegation, and proof under pressure.

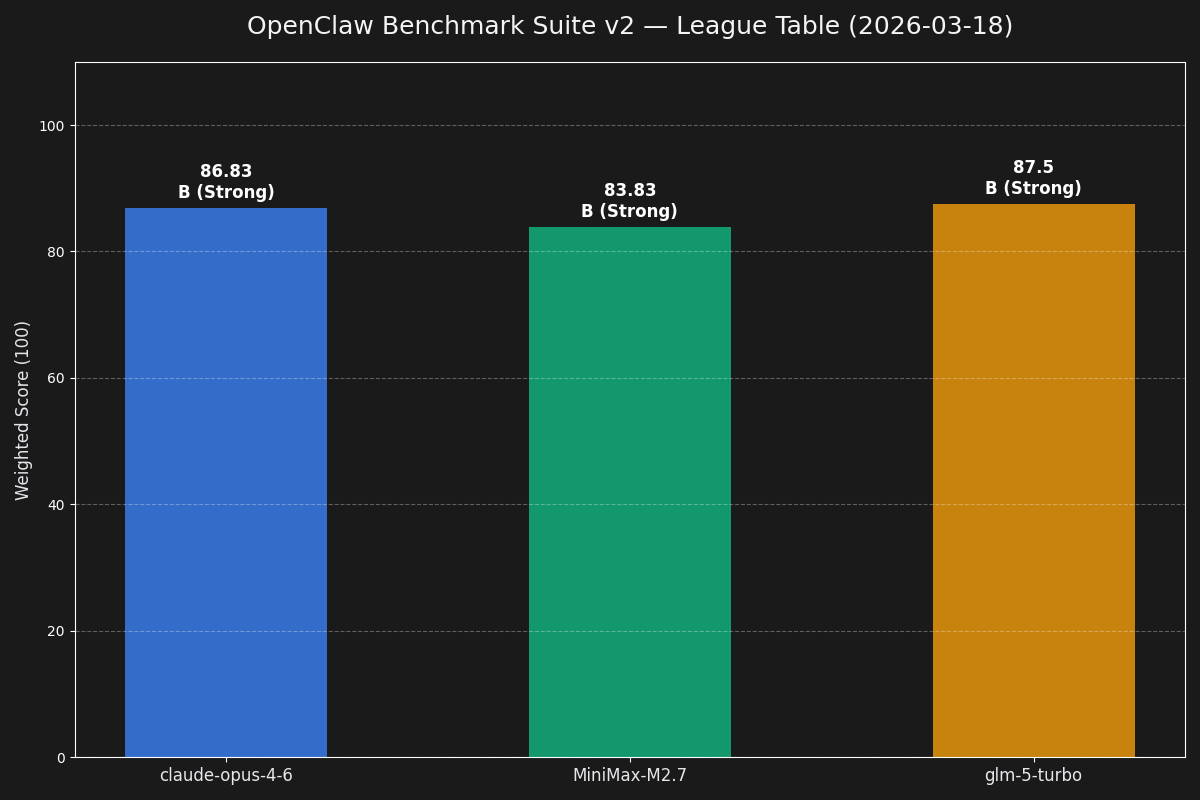

Shows the crowded top tier on lighter messaging and routing tasks before the harder operator tests separated them.

The first useful benchmark image. Good signal, but still too soft compared with the later operator suite.

Fresh detailed report page for the Qwen 3.5 Opus-distilled run. Good enough to publish as the first clickable model drill-down.

Quick operator-oriented local benchmark comparing Gemma 4 26B and 31B on Enterprise. 31B wins on quality; 26B wins hard on speed.

Recovered benchmark model entries from existing reference docs, the published benchmark article, and archived report artifacts, so the leaderboard reflects the broader canon instead of only the most recent Gemma run.